Scientists shouldn't be punished for being wrong

"How can we design experiments that work if we don’t tell each other what doesn’t?"

Anyone who works in research can recount, with remembered sorrow, the nights they spent at the lab bench, desperately trying to get results for a presentation the next morning. I remember sitting in the basement of the chemistry building here at the University of Toronto, way past the witching hour, injecting sample after sample into one of our instruments.

When the results finally came out, they showed that my experiment had worked – the months of chemical synthesis needed to set it up had been worth it. The next morning, I triumphantly showed the results to my supervisor. He too was excited about what this meant for my project. Neither of us scrutinized the results, because they were what we wanted to see. Don’t look a gift horse in the mouth, right?

But not questioning results because they’re what you want to see is the first step down a slippery slope. When I checked the data again, I found that I’d mistyped a data point – the results weren’t as spectacular as I’d thought. Here, the error was caught early, but the temptation to ignore mistakes grows once an incorrect result becomes the starting point for subsequent research.

No one wants to talk about this in the science community. We’re scientists; of course we check all our results! We’d always announce a correction if we discovered an error in published work, obviously. But the stark truth is that researchers – like everyone else – don’t like admitting that they’re wrong. And the ‘publish or perish’ culture – i.e. the link between the number of papers a scientist publishes and career success – has created an incentive for researchers to prioritize quantity over quality. Furthermore, many funding grants are given for a specific research goal, meaning scientists have very little room to maneuver after finding out that a hypothesis is wrong.

I would argue that the pressure to publish can put researchers between a rock and a hard place: either overlook errors or face having your funding slashed. While there is no excuse for dishonesty in science, it’s deplorable that the current system of academic science funding leaves a door open for scientists to cherry-pick results to secure funding, instead of funding them for whatever they actually find.


Some scientists, including Jonathan Baell at Monash University in Melbourne, Australia, are taking a stand against this kind of shoddy research. A medicinal chemist, Baell develops new molecules in the hope of finding better drugs. It's an area where scientific integrity is of paramount importance.

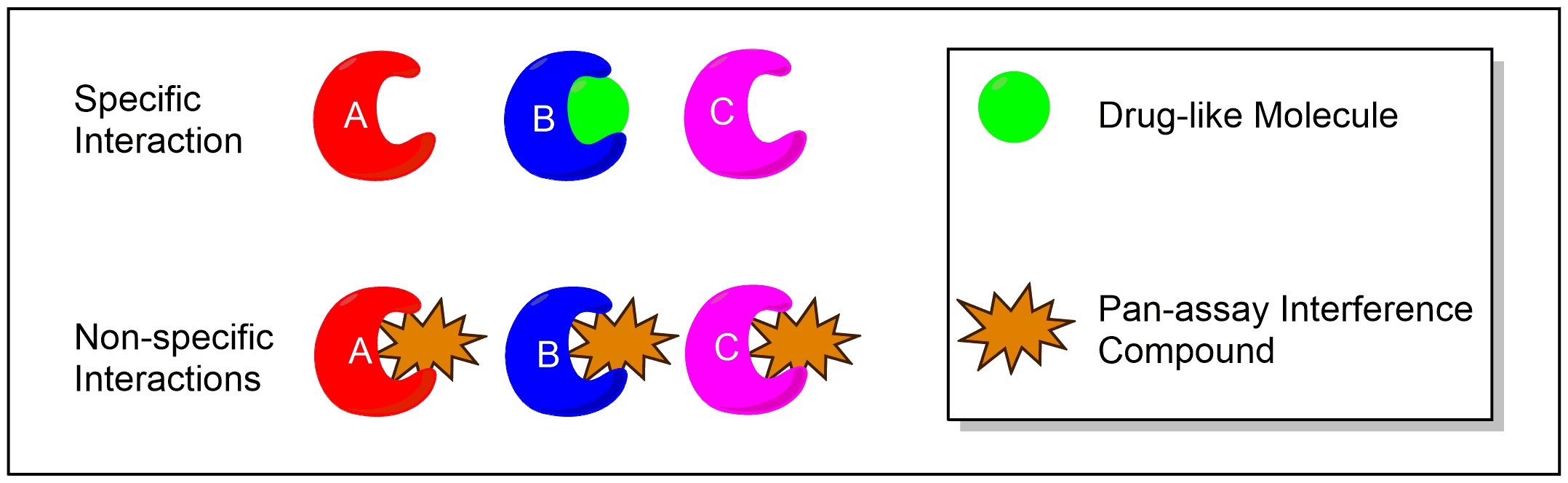

A key concept in drug discovery is the "screen": Researchers take a protein that they think is overactive in a certain disease and test a huge library of compounds to see whether any of them inhibit the protein’s activity. Any ‘hits’ become starting points from which to tailor molecular structure and eventually generate a drug that turns off this rogue protein in patients.

But many hits are false positives – that is, they show up as inhibitors, even though they're not. The error can come from many sources: experimental design, compound impurities, or even human slip-ups. But the point is that they’re mistakes that slip through because they’re what we wanted to see.

Rather than specifically interacting with one biological target, like the green drug candidate, PAINS compounds interact non-specifically with multiple biological targets.

About a decade ago, Baell and his coworkers noticed a pattern in the type of compounds that kept showing up as false positives. They used that information to create several red-flag categories for any drug candidate, termed PAINS (pan-assay interference compounds). To their surprise, labs worldwide were still publishing molecules that Baell would class as PAINS. Remember ‘publish or perish’? This is when it’s dangerous.

The growing prevalence of PAINS in the literature, and his own lab’s frustration with false positives, led Baell to write a paper in 2010 about the kinds of structures medicinal chemists should avoid in their search for new drugs. The paper is widely cited, and four years later he co-authored an opinion piece in Nature delving deeper into the PAINS pandemic.

Despite Baell’s best efforts, false positives still become published science. One study estimates a 50/50 chance that any given compound will interact with more than one biological target, despite most ‘hits’ being proclaimed as having a specific action.

This is not to say that these compounds will end up in patients. Drugs with off-target effects or poor efficacy will fail in preclinical (animal) or clinical (human) trials. But thousands of person-hours, millions of dollars, and hundreds of animal lives will be sacrificed by the time the mistake is caught.

If the system were changed and scientists were not blindly rewarded for any 'positive' result, I imagine a much more stable, sound, and honest body of knowledge being built. Publishing all our findings is the key to open science – even if we think they’re inconsequential.

“How are we to judge what may become consequential in an unexpected fashion?” Baell observed in an email to me. This muzzling of negative results, and the overselling of mediocre ones, can drown out the true breakthroughs in science.

We as scientists need to be more open about our flaws and our failures. Progress and successful results are, of course, mandatory, but how can we design experiments that do work if we don’t tell each other what doesn’t?

We shouldn't be penalized for disproving a hypothesis for which we secured funding. Mostly, we need the public and funding agencies to understand that while we work tirelessly towards progress, sometimes the meandering path – the honest, methodical, unglamorous path – is the road to the deepest breakthroughs.